In December 2016, I was approached by Toby Kurien (an engineer at the IBM Research | Africa lab in South Africa, located in the Tshimologong precinct) and asked if I would be interested in building a Raspberry Pi cluster for the lab. The system would consist of 13 Raspberry Pi 3 Model B SBCs: 12 of them would drive a series of 12 computer monitors in a video wall, with the 13th Pi acting as the master/controlling node of the system.

This blog post will attempt to list every step that was taken during the build of the system, what obstacles were encountered, and how I overcame most of them.

Toby heard about the Pi Cluster I had built previously for one of my assignments at Wits University (via the JCSE’s CPD Programme), and thought I could bring the experience I gained during that project into the build required by IBM Research | Africa.

I jumped at the opportunity!

What a privilege to be able to work alongside a truly progressive endeavour like IBM Research | Africa. I accepted the offer and started working on a plan straight away.

The Requirements

The Raspberry Pis needed to drive the 12 smaller monitors in this video wall:

At the time this picture was taken, the monitors were already hooked up to the main feed driving the bigger panel screens, so they were simply duplicating content already showing, in various display configurations. The idea behind having an independent controller (i.e. a Raspberry Pi) for each monitor is that when IBM’s researchers want to display content around their research, they can drop small, factual content onto each of the screens independently, as a side note to the main presentation happening on the centre display.

The requirements list:

- The existing computer system used to drive the video wall is housed inside a rack-mount server cabinet (off picture, to the right), so the Raspberry Pi cluster would need to fit into the existing cabinet to keep everything in close proximity to each other.

- The cluster would need its own network infrastructure, and not be connected to the existing IBM network within the building. One port would need to be made available for remote access should the need arise.

- The master Pi’s SD card should be easily accessible in case data corruption occurs and an image needs to be restored onto it.

- The master Pi’s USB connections should be made available via the chassis’ front panel, so plugging in USB drives or keyboards and mice would not require opening the chassis.

- The master Pi should illuminate the POWER ON LED when the system is powered on, to indicate that there is indeed power going through to it.

- The master Pi should illuminate the HDD LED when there is activity on the Pi, to indicate that the system has not hung and that work is actually being performed.

- The system should have 12 HDMI female output connectors on the back of the chassis so they can individually display their content on each of the screens respectively.

- All the Raspberry Pis need to turn on at the same time from one power switch, and from one power source.

- All the Raspberry Pis need to be resettable via a single reset switch, in case the entire system hangs or needs to boot again after a software power-down command.

- An LCD touch panel needs to be installed at the front of the chassis so an operator can interact with the master Pi without having to log in remotely via the network.

- The end product needs to look neat, clean and completed in a professional manner.

As you can see from the list above, I had a fair amount of work set out for me.

Next, I started acquiring all the hardware and components required to turn the above requirements into reality.

The Hardware

First on the list was getting all the Raspberry Pis. I ended up ordering them from The Pi Shop, a local South African supplier, as their price was the best I could find and they had ample stock available. I also ordered the heat-sinks from the same supplier (visible to the left of the bottom left Pi).

I then got 13x 8GB SanDisk Ultra SD cards from FirstShop, also a local supplier. The Pis did not require acres of storage space, as they were going to play their media across the network from the master Pi, which would in turn have the media on a removable USB drive.

From there, I ordered:

- 13x HDMI male to female panel mount cables

- 1x panel mount ethernet cable

- 1x ten pack of SIL 40 way connectors

- 1x 5″ HDMI LCD touch screen

from Micro Robotics, also a local supplier.

Next was the chassis. I opted for a 2U rack-mount version, as that was large enough to house all the components, cables and the required power supply. I went with a generic 2U chassis from Starsun Import & Export, a local supplier. The chassis met all the requirements with regard to front panel accessible USB ports, power LED and HDD LED, power switch and reset switch. The panels where optical drives would normally be installed (left hand side of the chassis) looked like an ideal place to mount the 5″ LCD touch panel. The half-height PCI brackets at the back of the chassis also seemed to be a great place to mount the HDMI panel mount connectors.

A power supply capable of delivering 5V with enough current for each of the Raspberry Pis (at least 1A each when under 100% CPU load) would be required, and it would also need to power the network switch and cooling fans within the chassis. Having built my previous Pi cluster using an ATX power supply, I decided to do the same with this build, as the procedure to modify the power supply to deliver exactly what you need is quite straightforward and has been done many times by various other builders on the web. The ATX power supply would also fit perfectly inside the 2U rack-mount chassis, as there is already a mounting bracket inside the chassis to hold it in place. I selected the Corsair CX430 ATX power supply, as the price point was right for the requirements and it offered the reliability of an 80 PLUS Bronze certified device. It is rated as being able to deliver 20A on the 5V rail, and 32A on the 12V rail. This was bought from Frontosa, a local computer components importer and reseller.
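As a rough sanity check on those numbers, the 5V budget can be sketched in a few lines of Python. This is only an illustration using figures quoted in this post (1A per Pi under load, the 20A rail rating, and the 5V at 1A quoted for the network switch further down). The fan draw is not stated anywhere, so it is left out, and the function and constant names are my own.

```python
# Figures quoted in the post; fan draw is not stated, so it is left out.
RAIL_5V_LIMIT_A = 20.0   # CX430 rating on the 5V rail
PI_COUNT = 13
PI_LOAD_A = 1.0          # minimum per Pi at 100% CPU load
SWITCH_LOAD_A = 1.0      # network switch: 5V at 1A


def five_volt_budget(pi_count=PI_COUNT, pi_load_a=PI_LOAD_A,
                     extra_loads_a=(SWITCH_LOAD_A,),
                     rail_limit_a=RAIL_5V_LIMIT_A):
    """Return (total_draw_a, headroom_a) for the 5V rail."""
    total = pi_count * pi_load_a + sum(extra_loads_a)
    return total, rail_limit_a - total


total, headroom = five_volt_budget()
print(f"Estimated 5V draw: {total:.1f} A, headroom: {headroom:.1f} A")
```

Calling `five_volt_budget(pi_load_a=2.5)` shows the other extreme: if every Pi drew the full 2.5A that the official supply can deliver, the headroom would go negative, so the 1A-per-Pi figure is the assumption that makes this rail sufficient.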

In order to reliably deliver over 1A of current, you need wire of the correct gauge to carry that amount of current. The official Raspberry Pi 3 power supply is capable of delivering 2.5A and, as such, uses 18 AWG wire to deliver that current to the Raspberry Pi. 18 AWG equates to wire around 1mm thick, preferably multi-strand copper. In order to do the same with the ATX power supply, I sourced 10 metres of red and black coloured, 1mm thick wire from my local auto spares shop. Most standard USB cables only handle 500mA of current, which is insufficient to power a Raspberry Pi 3 when under 100% CPU load. Most of those cables use 24 AWG wire, which is rated to deliver a maximum of 577mA.
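To put the gauge difference into numbers, here is a small Python sketch that uses the standard AWG diameter formula and the resistivity of copper to estimate the voltage drop along a power lead. The 0.5 m run length and 1A load are illustrative assumptions, not measurements from this build, and the calculation treats the wire as a solid conductor.

```python
import math

COPPER_RESISTIVITY_OHM_M = 1.72e-8  # annealed copper at roughly 20 °C


def awg_diameter_mm(gauge):
    """Diameter of a solid AWG conductor, from the standard AWG formula."""
    return 0.127 * 92 ** ((36 - gauge) / 39)


def resistance_ohm(gauge, length_m):
    """Resistance of a copper conductor of the given gauge and length."""
    radius_m = awg_diameter_mm(gauge) / 2 / 1000
    area_m2 = math.pi * radius_m ** 2
    return COPPER_RESISTIVITY_OHM_M * length_m / area_m2


def voltage_drop_v(gauge, run_length_m, current_a):
    """Drop across the supply and return conductors of one run (2 x length)."""
    return current_a * resistance_ohm(gauge, 2 * run_length_m)


# Assumed example: a 0.5 m lead carrying 1 A.
for gauge in (18, 24):
    drop = voltage_drop_v(gauge, run_length_m=0.5, current_a=1.0)
    print(f"{gauge} AWG: {awg_diameter_mm(gauge):.2f} mm diameter, "
          f"{drop * 1000:.0f} mV drop")
```

The thinner 24 AWG wire has roughly four times the resistance per metre of 18 AWG, so it loses about four times as much voltage for the same current and length.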

For the network switch, I opted to go for the D-Link DES-1016A 16-port Fast Ethernet Switch. Its form factor was small enough to fit nicely inside the 2U rack-mount chassis once taken out of its protective plastic housing. It requires 5V at 1A, so it can be powered by the same power supply as the Raspberry Pis. This was sourced from Rectron, a local computer components importer and reseller. Packets of RJ-45 connectors were also purchased from Rectron so custom length network cables could be made when required.

A variety of standoffs, nuts and screws were bought from Mantech and Communica, local electronic components resellers. 10 metres of CAT5e ethernet cable was purchased from a local computer shop.

The Build

The designs

I got the go-ahead to start the build a week before Christmas 2016, so many of the suppliers were either already closed or had none of the stock I needed. I decided to use the time to start designing the custom low profile PCI brackets required to house the panel mount HDMI connectors, as well as design the ATX form-factor base plates, cover plate, network switch plates and master Pi plates.

The software I used to design all of the brackets and plates is a cloud-based, in-browser application called Onshape. It’s free and relatively fast, but you’re only allowed to make publicly accessible designs when using a free account. All my designs can be viewed here. (Sign up is free and required to view the designs)

The low profile PCI brackets were to be 3D printed, as this seemed like the perfect thing for a 3D printer to do 🙂

Driven Alliance kindly offered the use of their Ultimaker 2 3D printer, where a few prototypes were printed and adjusted according to the dimensions of the HDMI panel mount headers, the space between the panel mount connectors, screw hole spacing and HDMI plug shape. The prototypes were printed using Formfutura’s CarbonFil filament, a carbon-fibre based plastic that’s super tough, warp resistant and just generally cool. A couple of the brackets were also printed at IBM’s Research Lab using a Morgan Pro 2 3D printer. The prints from that machine are insanely good, and influenced me to invest in one myself. But that’s a story for another time.

Once I was happy with the final design, a set of 9 brackets (3 spares) were printed all at once, filed down and cleaned up, spray painted black and had the HDMI connectors plugged in and screwed into their final positions.

Next was the design of the ATX form-factor plates which would allow me to house a set of Raspberry Pis inside a standard computer chassis. Luckily, there’s a wealth of information out there on ATX motherboard specifications, dimensions, hole placements and diameters, etc. Drawing up the initial plate was relatively quick, thanks to the detailed designs available on the internet. Once I had the base plate, I started adding cut-outs and mounting holes specifically for the Raspberry Pis. The image below shows the purpose of each cut-out.

Two of these plates were made, each holding 6 Raspberry Pis.

I then set about designing the top ATX cover plate, whose purpose is nothing more than adding a nice finishing touch to the build (but I could also argue that the cut-outs could act as cooling vents). I used the IBM logo on the top, which I imported off an SVG file from Wikipedia, as well as the Driven Alliance logo.

The master Pi plates were designed to house the controlling node at the front of the chassis, so it could be easily accessed if need be, as well as provide the best WiFi signal if we decide to access the unit wirelessly. The cut-outs for the Pi’s electrical and networking wiring are identical to the base plates for the slaves. The top cover plate was, once again, purely to add a nice finishing touch.

The networking switch plates are very basic, with holes for mounting into the chassis, holes to hold the switch in place, and a cover plate, you guessed it, to add a nice finishing touch.

Hacking the power supply

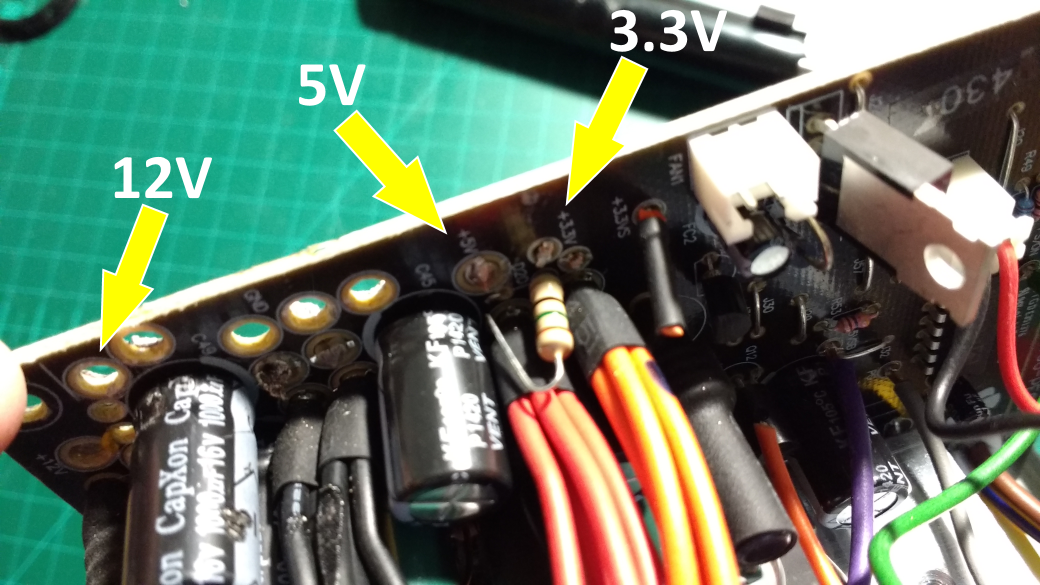

Unfortunately, I didn’t take many pictures while I was working on the power supply. There are many online videos that show you how to modify a power supply to deliver the voltage you’re after. Inside the ATX power supply, there are 3 rails, each delivering a different voltage: 3.3V, 5V and 12V. The 3.3V wires are orange, the 5V wires are red and the 12V wires are yellow. The black wires are ground. The only other wire we’re interested in is the green wire, which is the Power On wire that tells the ATX power supply to power up when it is shorted to ground.

When opening the power supply, you’ll see the orange, red and yellow wires grouped together, each group leading into the rail supplying its specified voltage. I removed the 3.3V (orange) wires completely by desoldering them from the PCB, as there was no use for them in this build. The existing 5V (red) wires were also removed and replaced with the 18 AWG wire I had bought from the auto spares shop. The original 5V wires in the power supply were already 18 AWG, but were the wrong length for this build. You want to minimise the number of joins, solder points and connectors when dealing with power lines, as each join/connection potentially adds more resistance to the line, increasing the voltage drop along it. I learnt this the hard way with my previous Pi cluster build.

I removed all but two of the 12V (yellow) wires, as I wanted to use these to power the master Pi and network switch independently of the slaves. In order to step the voltage down from 12V to 5V, I installed two adjustable buck converters that I sourced from banggood.com. They’re tiny, incredibly efficient and both fitted nicely inside a little enclosure at the back of the master Pi’s plate. As with the 5V (red) wires, I removed all the ground (black) wires and replaced them with the wire I got from the auto spares shop, cut to the correct lengths for this build. The ATX Power On (green) wire and a ground wire were put together into a DuPont connector so they could be wired up to the chassis’ power switch. The remaining wires, -12V (blue), power good (grey) and 3.3V sense (brown), were all cut short and insulated inside the power supply housing.

The power leads for all the Pis were fitted with female DuPont connectors, as the Pis were going to be powered via the header pins (pin 4 for 5V and pin 6 for ground), not via the micro-USB connector. This was due to space constraints within the chassis.

Making the entire system resettable

To reset all the Raspberry Pis via the front panel RESET switch, every single Pi needed to have reset pins soldered into the RUN mounting holes near the back of the GPIO pins, by the USB connectors. This is no small feat, as the surface area for contact when trying to solder the pins into place is incredibly small. Multiple attempts were made to make them work correctly, and I finally got it right about halfway through the batch of 13 Pis. I re-soldered the first half of the batch again using my newly learnt technique, and they all ended up soldered correctly.

Once that was done, I started making the wiring loom to connect all the reset pins to the RESET switch on the front of the 2U chassis. I bought two reels of 24 AWG wire (blue and white), measured the lengths I would need to reach all the Pis, housed each connection in a female DuPont connector, and soldered the wires together at one junction, with one lead going to the RESET switch via a male DuPont connector. I tested that all the connections were good by placing LEDs into each of the connectors and supplying enough voltage to illuminate them.

Modifying the chassis switch panel

By default, chassis that house ATX power supplies usually have something called “momentary” power switches that “close” a circuit while the button is held down. As soon as you let go of the button, the circuit “opens” again, stopping current from flowing. What normally happens is that the ATX motherboard takes over the responsibility of keeping the circuit “closed”, so the system remains powered on until a power off command is issued and the motherboard releases its hold on power.

In this build, we don’t have such a mechanism that ATX motherboards provide, so I had to modify the switch panel by replacing the momentary switch with a latching switch. The switch latches into a position depending on which state it is in. If the latch is all the way up, the connection is open, preventing current from flowing. When the latch is down, it latches into a permanent “on” state, closing the circuit and allowing current to flow. Pressing the switch again reopens the circuit.

The wiring loom of the chassis switch panel was shortened, as the wires for the Power ON LED, HDD LED, power switch and reset switch, as well as the two USB connectors, were all going to connect either to the master Pi or to the power and reset wiring mentioned previously. Two USB-A male connector leads were purchased from Communica and soldered onto the wires coming from the front panel of the chassis. These were then connected to the master Pi and tested for connectivity.

The Power ON LED was connected to the 3.3V pin (pin 1) and ground (pin 9) and the HDD LED was connected to pins 12 and 14 (GPIO 18 and ground) on the master Pi.
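The Power ON LED needs no software, since it sits directly on the 3.3V pin, but the HDD LED on pin 12 has to be driven by something. How that was done isn't recorded here, so the following is only a minimal sketch of one possible approach: sample the CPU counters in `/proc/stat` and light the LED whenever the master Pi is busy. The use of CPU load as the "activity" signal, the 5% threshold, the 0.2 second interval and the choice of the RPi.GPIO library are all assumptions.

```python
import time

LED_PIN = 12  # physical pin 12 (GPIO 18), as wired above


def parse_cpu_times(stat_line):
    """Return (busy, total) jiffies from the aggregate 'cpu' line of /proc/stat."""
    # Use user, nice, system, idle, iowait, irq, softirq, steal only;
    # the guest fields that may follow are already counted in user/nice.
    fields = [int(v) for v in stat_line.split()[1:9]]
    total = sum(fields)
    idle = fields[3] + (fields[4] if len(fields) > 4 else 0)  # idle + iowait
    return total - idle, total


def busy_fraction(before, after):
    """Fraction of CPU time spent busy between two (busy, total) samples."""
    delta_total = after[1] - before[1]
    if delta_total <= 0:
        return 0.0
    return (after[0] - before[0]) / delta_total


def read_sample(path="/proc/stat"):
    """Read the current (busy, total) sample from the kernel."""
    with open(path) as stat_file:
        return parse_cpu_times(stat_file.readline())


def run(threshold=0.05, interval_s=0.2):
    """Drive the LED forever; must run on the Pi with RPi.GPIO installed."""
    import RPi.GPIO as GPIO  # imported here so the helpers work off-device
    GPIO.setmode(GPIO.BOARD)  # BOARD mode uses physical pin numbers
    GPIO.setup(LED_PIN, GPIO.OUT)
    previous = read_sample()
    try:
        while True:
            time.sleep(interval_s)
            current = read_sample()
            GPIO.output(LED_PIN, busy_fraction(previous, current) > threshold)
            previous = current
    finally:
        GPIO.cleanup()
```

Calling `run()` from a script started at boot would keep the LED flickering while work is being done and dark when the Pi is idle or hung.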

Making the network cables

Space was a big concern with this build as we were packing 13 Raspberry Pis, 12 HDMI panel mount connectors and a network switch all into one chassis. This leaves very little breathing room for wiring, whether it be power, function (reset) or networking. The plastic sheathing around standard CAT5 UTP ethernet cables has a lot of air inside it, taking up space. I came up with the idea of removing the plastic sheathing on all 13 ethernet cables and housing them in heat-shrink. Once enough heat had been applied, the heat-shrink tightened around the wires, reducing the amount of space each ethernet cable needs when running through wiring conduits. This saved space, made the cables black and generally made the wiring look more appealing. Form and function!

Putting it all together

This was the hardest part of the build. No matter how many times you measure, something always comes up short. An example of this would be the first resettable wiring loom I made. It was far too short and I ended up having to make another, longer version. An even more extreme example would be the ATX plates I made. I hadn’t considered the length of the HDMI headers that plug into the Raspberry Pis. I needed an extra 60mm of space between the Raspberry Pis for the HDMI cables to fit, so the first batch of ATX plates was set aside and new ones with the modified dimensions were created. Luckily, having Perspex laser cut and supplied isn’t that expensive, so it wasn’t too disastrous.

After many, many hours of manoeuvring wires, mounting holes, feeding ethernet and power cables through the tiny cut-outs I had designed, it all magically fitted together.

Creating the front LCD touch panel mount

With all the main parts of the cluster now in place, it was time to make the front LCD touch panel mount for the master Pi. The SD card and network port of the master Pi had to be accessible from the front of the unit, so any required maintenance can be done without having to open the entire unit.

The finished product! IBM logo redacted due to legal/copyright restrictions

The software

Raspbian Jessie with the Pixel Desktop was chosen as the operating system to install on these Raspberry Pis. The January 2017 release was installed on the master Pi and one slave node. Once the slave node was up and running, its SD card was cloned to all the other SD cards, and each clone then had its /etc/hostname and /etc/hosts files modified to give it a unique name. dnsmasq was installed on the master Pi so it could act as both a DNS and DHCP server.
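Editing twelve cloned cards by hand is error-prone, so the renaming step lends itself to a small script. The sketch below is not the procedure actually used; it only shows how the two files could be rewritten. It assumes the cloned image still carries the stock Raspbian hostname `raspberrypi` and uses the `slave01`, `slave02`, ... naming mentioned later in this post. The function names are my own.

```python
import re


def hostname_for(index, prefix="slave"):
    """Build a zero-padded node name such as 'slave03'."""
    return f"{prefix}{index:02d}"


def rewrite_hosts(hosts_text, old_name, new_name):
    """Replace whole-word occurrences of old_name in /etc/hosts content."""
    pattern = re.compile(rf"(?<![\w.-]){re.escape(old_name)}(?![\w.-])")
    return pattern.sub(new_name, hosts_text)


def rewrite_hostname_file(new_name):
    """Content for /etc/hostname: the name followed by a newline."""
    return new_name + "\n"


# Example with the two lines a stock image typically contains.
original = "127.0.0.1\tlocalhost\n127.0.1.1\traspberrypi\n"
for node in (1, 2, 3):
    name = hostname_for(node)
    print(rewrite_hostname_file(name), end="")
    print(rewrite_hosts(original, "raspberrypi", name))
```

With each card mounted on another machine, the returned strings would be written to `etc/hostname` and `etc/hosts` on that card's root partition before it is booted for the first time.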

I managed to break one of the SD cards during the installation process, so I donated one of my 32GB Samsung EVO SD cards for use in the master Pi, letting the previous 8GB SD card act as a replacement in one of the slaves.

Hooking it up at IBM

We installed the system into the rack-mount server cabinet, plugged in the HDMI cables, turned the system on and …

It worked!

Some configuration was required to rotate four of the screens by 90 degrees, as that is how they were installed in the video wall, and to set the resolution to Full HD (1920 x 1080), but other than that, it all worked flawlessly. Toby modified the devices so the IBM Research Lab logo displays as the splash screen while Pixel Desktop boots up, and set the desktop backgrounds to show the IBM Research logo. He also reconfigured the Pis’ naming conventions and IP addresses according to which screen each one displayed on. So “slave01” would display on screen 1, “slave02” on screen 2, and so on.
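The exact settings used aren't listed here, but on Raspbian Jessie the usual place for both is /boot/config.txt. The Python sketch below generates the relevant lines for a given rotation. It assumes the firmware options `hdmi_group`, `hdmi_mode` and `display_rotate`, and it assumes CEA mode 16 (1080p at 60 Hz); some computer monitors may need a DMT mode instead.

```python
# display_rotate values understood by the firmware, keyed by degrees.
ROTATION_CODES = {0: 0, 90: 1, 180: 2, 270: 3}


def config_lines(rotation_degrees=0):
    """Return config.txt lines for Full HD output with optional rotation."""
    if rotation_degrees not in ROTATION_CODES:
        raise ValueError(f"unsupported rotation: {rotation_degrees}")
    return [
        "hdmi_group=1",  # CEA timings (assumed; DMT would be group 2)
        "hdmi_mode=16",  # 1920 x 1080 at 60 Hz within the CEA group
        f"display_rotate={ROTATION_CODES[rotation_degrees]}",
    ]


# A landscape screen and a screen mounted at 90 degrees.
for degrees in (0, 90):
    print(f"# rotation {degrees}")
    print("\n".join(config_lines(degrees)))
```

The four rotated screens would get the 90 degree variant (or 270, depending on which way each monitor was turned) and the remaining eight the unrotated one.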

Conclusion

I felt this was a project that really pushed me to do the best work I could possibly do, even though I had never attempted anything at this scale before. I’d like to thank Toby Kurien for his assistance and patience during the making of this system.

If you found this post to be valuable, please leave a comment and I will respond.

G.
